OpenAI has introduced Sora, a generative AI text-to-video model which, compared to existing competitors, leaves you speechless with the quality and length of the videos it generates.

From distorted 16-second videos to near-perfect 60-second videos

A text-to-video model creates videos from text descriptions that the user provides in a prompt, in the same way that text-to-image models create images.

Some of the most popular image generators include Midjourney, Stable Diffusion, and DALL-E. The last of these is from OpenAI and can also be used through ChatGPT Plus and Microsoft's Copilot.

In the field of video, things become more complicated because the sequence of frames generated from the prompt must stay coherent from one frame to the next. This property is not easy to maintain, and in most models and services available to date the failure often shows up as distorted subjects in one or more frames of the video.

Although Pika and Google's Lumiere have emerged recently, the most popular and up-to-date text-to-video generator at the moment is Runway, which can also create videos from a prompt and a starting image, as well as apply painting techniques to pan and animate subjects and the entire scene. But Runway can only produce videos up to 15 seconds long, or 16 seconds if you use the Gen-2 model's video expansion option introduced in March of last year.

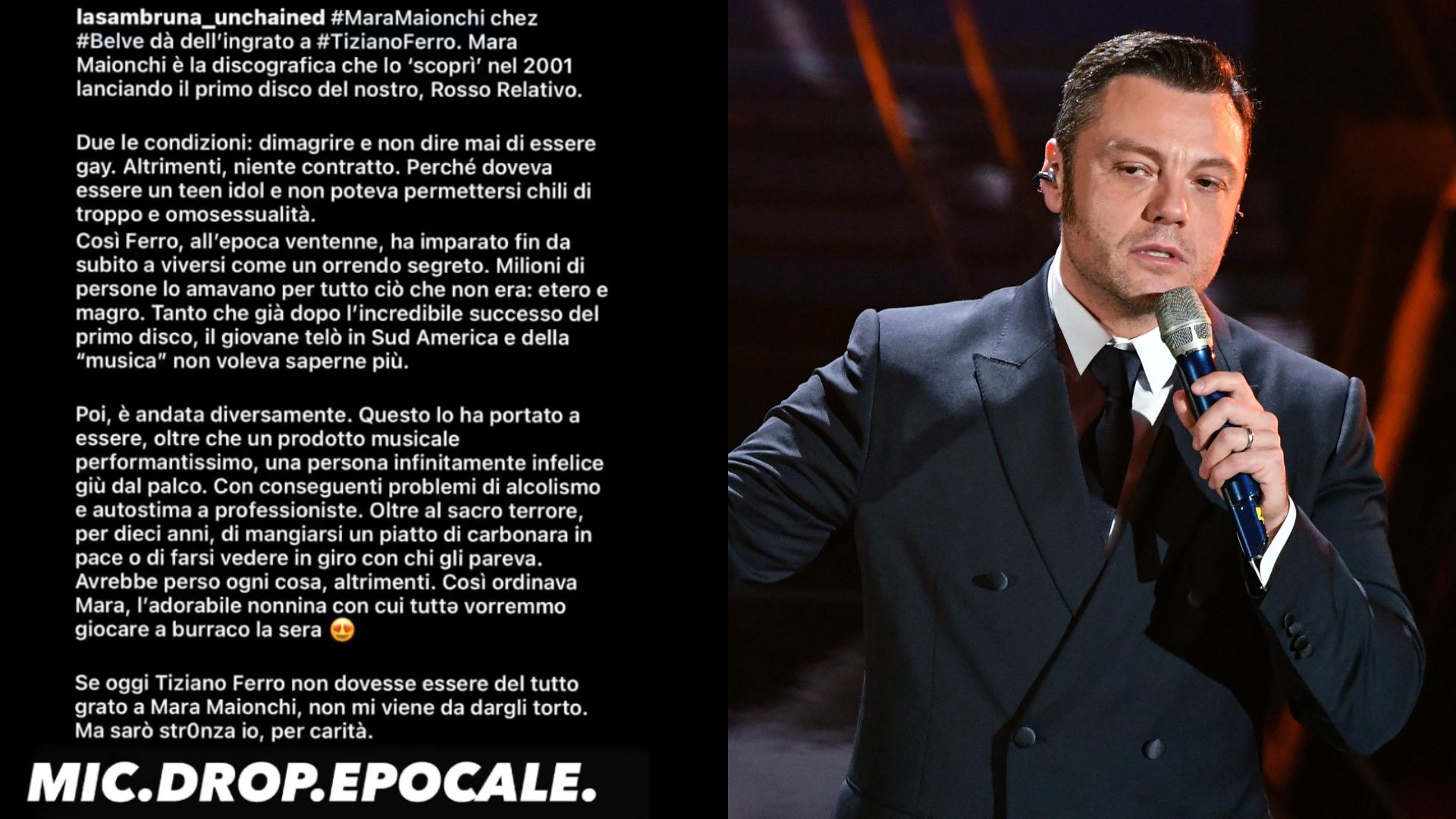

OpenAI's Sora can create 60-second videos of amazing quality: just look at the examples shown by the company. In particular, the shots of the man in the snow are really impressive, not only in terms of frame consistency but also in the quality of the subject's face.

Certainly, the videos published by OpenAI are some of its best examples, but if we compare them with one of the videos Runway used to announce the quality of its Gen-2 model, we are on two completely different planets, with OpenAI undoubtedly the more advanced.

OpenAI says Sora is capable of creating complex scenes with multiple characters, specific types of movement, and precise details of the subject and background. Additionally, Sora can create multiple shots in a single video while accurately maintaining characters and visual style. It can also produce a video starting from an image.

However, there are limits. Sora may have difficulty accurately simulating the physics of a complex scene and may not understand specific instances of cause and effect. OpenAI gives the example of someone biting a cookie, where the cookie is intact again in the next scene.

Sora is currently only available to a “red team” selected by OpenAI, which is evaluating the model to identify potential risks and harms resulting from its use.

For example, a model capable of generating scenes of this quality can be an effective tool for creating realistic deepfake videos. In early February, OpenAI announced the introduction of C2PA digital watermarking for images created with DALL-E.

The Coalition for Content Provenance and Authenticity (C2PA) is a group made up of companies like Adobe and Microsoft that offers a system of digital watermarks embedded in images created using artificial intelligence, so they can be recognized as such. OpenAI plans to offer C2PA watermarks for Sora videos as well.
